Dr. Danyal Fer sat on a stool a few feet from a long-armed robot, wrapping his fingers around two metal handles near his chest.

As he moved the handles – up and down, left and right – the robot mimicked every little movement with its own two arms. Then, when he squeezed his thumb and forefinger together, one of the robot’s tiny claws did the same. This is how surgeons like Dr. Fer have long used robots to operate on patients. They can remove a prostate from a patient while sitting at a computer console in the room.

After this brief demonstration, Dr. Fer and his colleagues at the University of California, Berkeley, showed how they hope to advance the state of the art. Dr. Fer let go of the handles, and a new kind of computer software took over. As he and the other researchers watched, the robot began to move on its own.

With one claw, the machine lifted a tiny plastic ring from an equally tiny peg on the table, passed the ring from one claw to the other, moved it across the table, and carefully hooked it onto a new peg. Then the robot did the same with several more rings, completing the task as quickly as it had when guided by Dr. Fer.

The training exercise was originally designed for humans; by moving the rings from peg to peg, surgeons learn to operate robots like the one in Berkeley. According to a new research report from the Berkeley team, an automated robot performing the test can match or even outperform a human in skill, precision, and speed.

The project is part of a much broader effort to bring artificial intelligence into the operating room. Using many of the same technologies that support self-driving cars, autonomous drones, and warehouse robots, researchers are also working to automate surgical robots. These methods are still far from everyday use, but progress is accelerating.

“It’s an exciting time,” said Russell Taylor, a professor at Johns Hopkins University and a former IBM researcher known in academia as the father of robotic surgery. “This is where I was hoping we would be 20 years ago.”

The aim is not to remove surgeons from the operating room, but to reduce their burden and possibly even increase the success rate – where there is room for improvement – by automating certain phases of the operation.

Robots can already exceed human accuracy on some surgical tasks, such as placing a pin into a bone (a particularly risky task during knee and hip replacements). The hope is that automated robots can bring greater accuracy to other tasks, like incisions or suturing, and reduce the risks that come with overworked surgeons.

During a recent phone conversation, Greg Hager, a computer scientist at Johns Hopkins, said that surgical automation would advance much like the Autopilot software that was guiding his Tesla down the New Jersey Turnpike as he spoke. The car was driving on its own, he said, but his wife still had her hands on the steering wheel in case something went wrong. And she would take over when it was time to exit the highway.

“We can’t automate the whole process, at least not without human oversight,” he said. “But we can start developing automation tools that make a surgeon’s life a little easier.”

Five years ago, researchers at the Children’s National Health System in Washington, D.C., developed a robot that could automatically suture a pig’s intestines during surgery. It was a notable step toward the kind of future Dr. Hager envisions. But it came with an asterisk: the researchers had implanted tiny markers in the pig’s intestines that emitted near-infrared light and helped guide the robot’s movements.

The method is far from practical, as the markers cannot be easily implanted or removed. But in recent years, artificial intelligence researchers have greatly improved the performance of computer vision, which could allow robots to perform surgical tasks on their own without such markers.

The change is driven by what are called neural networks, mathematical systems that can learn skills by analyzing large amounts of data. By analyzing thousands of cat photos, for example, a neural network can learn to recognize a cat. Similarly, a neural network can learn from images captured by surgical robots.

Surgical robots are equipped with cameras that record three-dimensional video of each operation. The video streams into a viewfinder that surgeons peer into as they guide the operation, watching from the robot’s point of view.

Afterward, though, these images also provide a detailed road map showing how operations are performed. They can help new surgeons understand how to use these robots, and they can help train robots to handle tasks on their own. By analyzing images that show a surgeon guiding the robot, a neural network can learn the same skills.

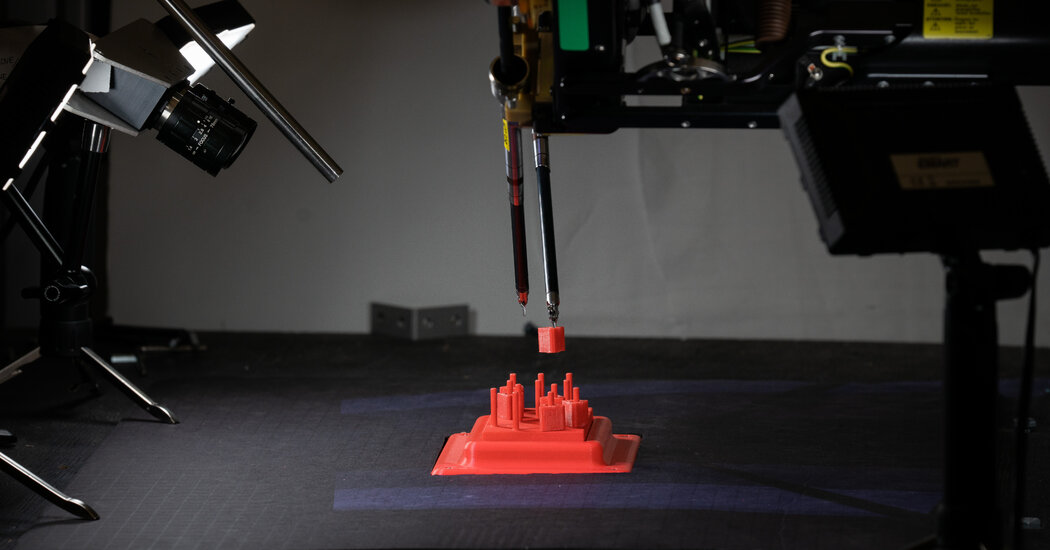

This is how the Berkeley researchers have been working to automate their robot, which is based on the da Vinci Surgical System, a two-armed machine that helps surgeons perform more than a million procedures a year. Dr. Fer and his colleagues collect images of the robot moving the plastic rings under human control. Then their system learns from these images, pinpointing the best ways to grip the rings, pass them between claws, and move them onto new pegs.

However, this process came with its own asterisk. When the system told the robot where to go, the robot often missed the spot by millimeters. Over months and years of use, the many metal cables in the robot’s twin arms had stretched and bent in small ways, so that its movements weren’t as precise as they needed to be.

Human operators could unconsciously compensate for this shift. But the automated system couldn’t. This is often the problem with automated technology: it struggles to deal with change and uncertainty. Autonomous vehicles are still a long way from being widespread as they are not yet nimble enough to cope with the chaos of the everyday world.

The Berkeley team decided to build a new neural network that analyzed the robot’s errors and learned how much precision it was losing with each passing day. “It learns how the robot’s joints develop over time,” said Brijen Thananjeyan, a PhD student on the team. Once the automated system could account for this change, the robot could grab and move the plastic rings as well as human operators could.

Other laboratories are trying different approaches. Axel Krieger, a Johns Hopkins researcher who was part of the pig-suturing project in 2016, is working on automating a new type of robotic arm, one with fewer moving parts that behaves more consistently than the type of robot used by the Berkeley team. Researchers at the Worcester Polytechnic Institute are developing ways for machines to carefully guide surgeons’ hands as they perform particular tasks, such as inserting a needle for a cancer biopsy or burning into the brain to remove a tumor.

“It’s like a car where the lane following is autonomous, but you still control the gas and the brakes,” said Greg Fischer, one of the Worcester researchers.

Scientists acknowledge that many obstacles lie ahead. Moving plastic pegs is one thing; cutting, moving and suturing flesh is another. “What happens if the camera angle changes?” said Ann Majewicz Fey, an associate professor at the University of Texas, Austin. “What if smoke gets in the way?”

For the foreseeable future, automation will be something that works with surgeons rather than replacing them. But even that could have profound implications, said Dr. Fer. For example, doctors could perform operations over distances well beyond the width of the operating room – perhaps several kilometers or more – to help wounded soldiers on distant battlefields.

Signal delay is currently too great to allow this. But if a robot could handle at least some of the tasks on its own, remote surgery could become viable, said Dr. Fer: “You could send a high-level plan and then the robot could execute it.”

The same technology would be essential for remote operation over even greater distances. “If we humans operate on the moon,” he said, “surgeons will need entirely new tools.”